FICO® Decisions Blog

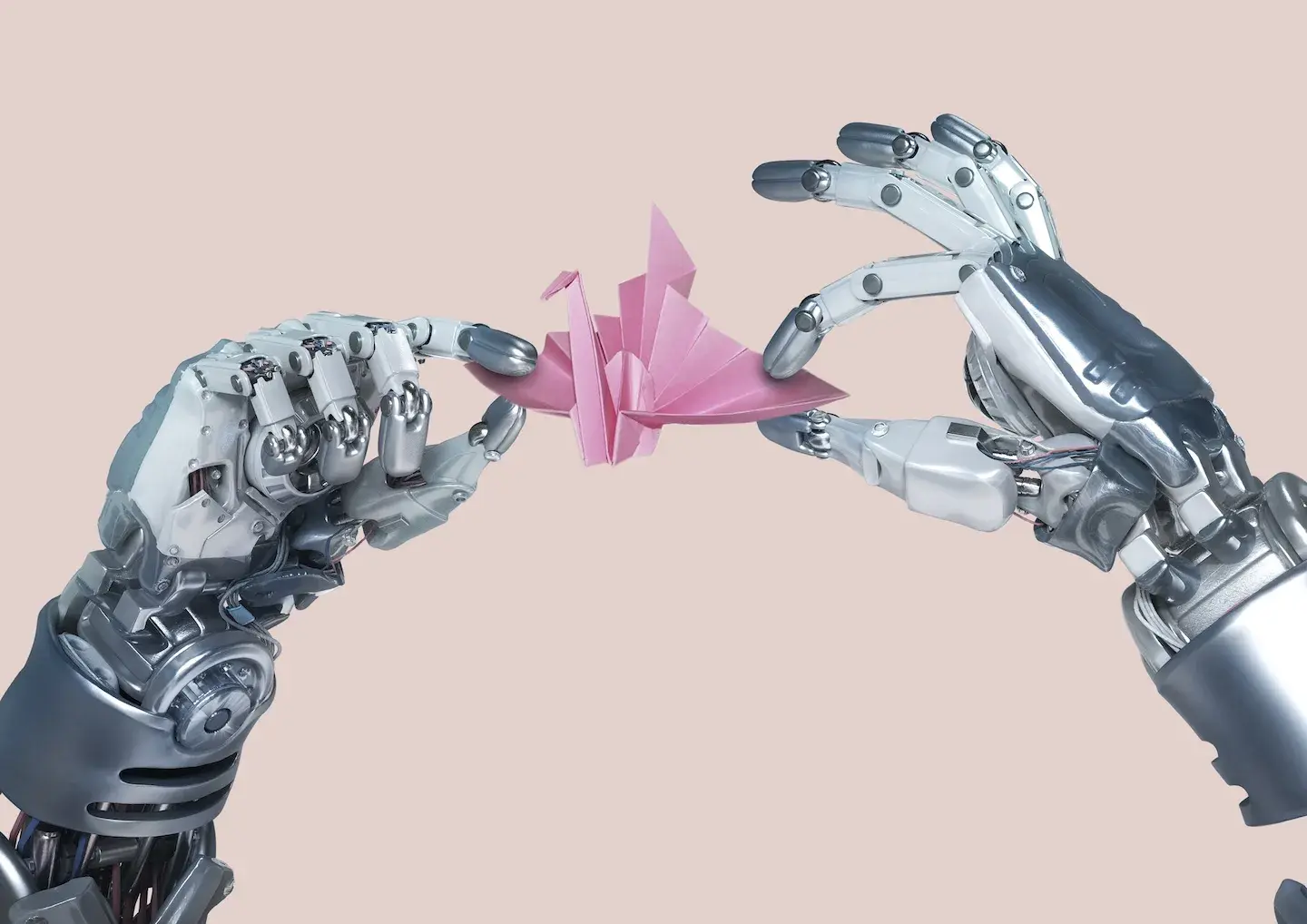

Hear from the experts on applied intelligence, AI, decision management, fraud prevention, customer centricity and more.

Popular Posts

Business and IT Alignment is Critical to Your AI Success

These are the five pillars that can unite business and IT goals and quickly convert artificial intelligence into measurable value


It’s 2021. Do You Know What Your AI Is Doing?

A new "State of Responsible AI" report from Corinium and FICO finds that most companies don’t, and that they are deploying artificial intelligence at significant risk


FICO® Score 10T Decisively Beats VantageScore 4.0 on Predictability

An analysis by FICO data scientists has found that FICO Score 10T significantly outperforms VantageScore 4.0 in mortgage origination predictive power.
