Can Machine Learning Build a Better FICO Score?

FICO conducted a research project to see just how much lift unconstrained, state-of-the-art ML techniques might offer over the FICO Score.

FICO has long been a pioneer in the use of machine learning (ML) and artificial intelligence (AI) to help our clients improve decisions in the financial services industry. Our first patent in this field was over 25 years ago regarding the use of neural nets to detect credit card transaction fraud. Machine learning is used throughout the company, not just in our industry-leading consumer fraud solutions, but in solutions ranging from cybersecurity risk detection to adaptive marketing profiles.

When it comes to credit scoring, we combine the power and speed of insights derived from ML with our 25+ years of domain expertise to ensure that the resulting models are highly predictive as well as explainable to clients, regulators and consumers. This explainability is vital. We recently hit a milestone of 250 million consumer accounts in the US that can access their FICO® Scores for free through our FICO® Score Open Access program. It is important that we be able to explain to these consumers in clear and concise fashion why they scored the way they did.

There are a number of challenges associated with ML techniques — particularly state-of-the-art techniques such as random forests and gradient boosted trees — as far as producing fully transparent, interpretable, and palatable models. While building Explainable AI is something that FICO is very heavily invested in, it is still an active area of research (spanning over the last 25 years) and is relatively nascent in the eyes of regulatory bodies.

Still, we are always testing new approaches and analytic techniques in our ongoing quest to enhance the predictiveness of our FICO® Score models. As part of this, we conducted a research project to see just how much predictive lift unconstrained, state-of-the-art ML techniques might bring to the FICO Score. I will present the results at FICO World 2018 — here’s an overview.

The Machine Learning Test

We currently build our FICO® Score models using the Scorecard Module technology within our FICO® Model Builder software package. The output of a model built via Scorecard Module is an interpretable, engineered scorecard. To calculate FICO Scores, we develop systems of segmented scorecards, each tuned to a different sub-population. And just like our scorecards are engineered to be highly predictive and explainable, so are the associated segmentation schemes.

In our research, we rebuilt our latest FICO® Score — FICO® Score 9 — using two state-of-the-art learning techniques: neural nets and gradient boosted trees. We controlled everything else about this experiment: We used the same dataset of millions of consumer credit files that we used to build FICO Score 9, and the same set of hundreds of variables that we used as candidates for inclusion in the FICO Score 9 model.

That’s an important point. We were seeking a truly apples-to-apples comparison of the different modeling techniques versus confounding this analysis with exploration of relative strengths in feature creation. The goal was to understand what kind of improvement in model accuracy could be obtained by exploring ML model architectures with all else held equal, utilizing unconstrained ML techniques.

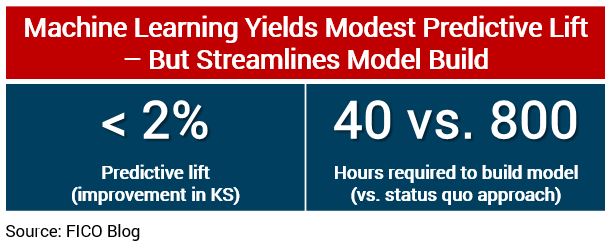

Our findings may surprise you: We identified very modest predictive lift of less than 2% improvement in KS (the Kolmogorov-Smirnov statistic) from using these cutting edge ML techniques. We examined the scores’ effectiveness across different products (auto, mortgage and bankcard), as well as different lifecycle applications (acquisitions and account management). Consistently, we found that the additional lift offered by the ML-driven algorithms was in that modest 1-2% range.

Why Didn’t Machine Learning Give Us More Lift?

So why such little lift? When you’ve mined a relatively stable data source for 25+ years as we have with credit bureau data, and over that time built out very sophisticated systems of scorecards, that approach seems to be able to capture almost all of the signal and complex interactions among variables that even cutting-edge ML techniques can uncover.

While ML may not be the solution to drive an evolutionary improvement in the FICO® Score, we still saw plenty of evidence of the reasons machine learning is going to be a key driver of the future of analytics.

One data point: It took a single analyst a matter of 40 hours to build the ML score that showed slightly improved risk prediction over the FICO® Score 9 models, which took 5 analysts a month to build. We’re talking about an order of magnitude reduction in the number of resource hours required to build out the algorithm. For a score with broad adoption like the FICO® Score, it’s easier to make the case for investing those additional hours to have 5 analysts refine the model. But for R&D efforts aimed at studying new analytic challenges or new data sources where we have less expertise, ML can be a very powerful tool for getting to important insights much faster than conventional methods.

Why doesn’t a 2% improvement — indeed, any improvement — justify replacing the FICO® Score with one built using state-of-the-art machine learning techniques? It might, were it not for the explainability issues noted earlier. With the ML models, we get reduced model transparency, and an inability to ensure that the resulting patterns encoded in the model are fully palatable and intuitive. It would be hard to explain these patterns to consumers or regulators, not to mention lenders.

Research Continues

FICO is heavily invested in ML and its application to fraud detection, cybersecurity, marketing and other business challenges. Within the realm of credit risk, we use ML as a powerful tool for getting to fast insights about the potential of credit risk scores in new markets, or the potential of new data sources we haven’t previously worked with, as well as for horse-racing (aka benchmarking) our Scorecard-based models against the latest and greatest machine learning breeds.

We’re going to continue to research ways to bring the advantages machine learning offers in terms of automation and model effectiveness together with our domain expertise in building palatable and explainable models. Keep an eye on the explainable AI topic, it’s a major focus of ML research at FICO right now.

For more insights on this ML “horse race”, including our findings as relates to comparative predictive strength and model explainability/palatability, please join me at FICO World the week of April 16th.

Popular Posts

Has the Reporting of Rental Data to the Credit Reporting Agencies (CRAs) Increased?

FICO Score 10T includes rental data, but consumers can only experience the benefit of this to the extent that their rental data is reported to the CRAs

Read more

Average U.S. FICO® Score at 716, Indicating Improvement in Consumer Credit Behaviors Despite Pandemic

The FICO Score is a broad-based, independent standard measure of credit risk

Read more

FICO Statement on FHFA and FHA Updates to Credit Score Modernization

FICO supports FHFA’s announcement that the long-anticipated historical data for FICO® Score 10T will be released to the mortgage market.

Read moreTake the next step

Connect with FICO for answers to all your product and solution questions. Interested in becoming a business partner? Contact us to learn more. We look forward to hearing from you.